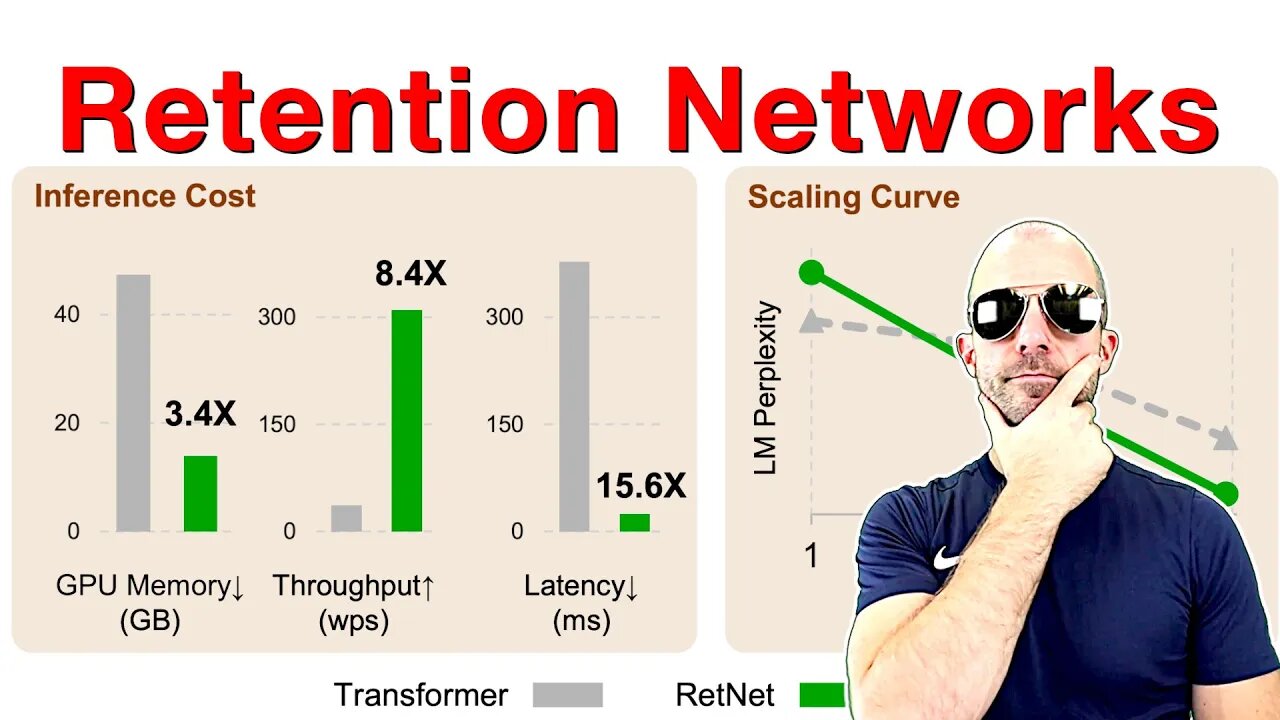

Retentive Network: A Successor to Transformer for Large Language Models (Paper Explained)

#ai #retnet #transformers

Retention is an alternative to Attention in Transformers that can be written in both a parallel and a recurrent form. This means the architecture achieves training parallelism while maintaining low-cost inference. Experiments in the paper look very promising.

OUTLINE:

0:00 - Intro

2:40 - The impossible triangle

6:55 - Parallel vs sequential

15:35 - Retention mechanism

21:00 - Chunkwise and multi-scale retention

24:10 - Comparison to other architectures

26:30 - Experimental evaluation

Paper: https://arxiv.org/abs/2307.08621

Abstract:

In this work, we propose Retentive Network (RetNet) as a foundation architecture for large language models, simultaneously achieving training parallelism, low-cost inference, and good performance. We theoretically derive the connection between recurrence and attention. Then we propose the retention mechanism for sequence modeling, which supports three computation paradigms, i.e., parallel, recurrent, and chunkwise recurrent. Specifically, the parallel representation allows for training parallelism. The recurrent representation enables low-cost O(1) inference, which improves decoding throughput, latency, and GPU memory without sacrificing performance. The chunkwise recurrent representation facilitates efficient long-sequence modeling with linear complexity, where each chunk is encoded parallelly while recurrently summarizing the chunks. Experimental results on language modeling show that RetNet achieves favorable scaling results, parallel training, low-cost deployment, and efficient inference. The intriguing properties make RetNet a strong successor to Transformer for large language models. Code will be available at this https URL.
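
To make the "parallel vs. recurrent" claim concrete, here is a minimal sketch of a single retention head in plain Python. It is a simplification and not the paper's full layer: it assumes one head with a fixed decay `gamma` and leaves out the position rotation, normalization, gating, and multi-scale heads. The function names and the use of nested lists for matrices are my own choices for illustration. The parallel form computes (Q Kᵀ ⊙ D) V with D[n][m] = gamma^(n-m) for n ≥ m and 0 otherwise; the recurrent form keeps a state S and updates it as S ← gamma · S + kᵀ v, then reads out q S.

```python
def retention_parallel(Q, K, V, gamma):
    """Parallel form: O = (Q K^T * D) V, D[n][m] = gamma**(n-m) if n >= m else 0."""
    d_v = len(V[0])
    out = []
    for n in range(len(Q)):
        row = [0.0] * d_v
        for m in range(n + 1):  # causal: position n only sees m <= n
            score = sum(q * k for q, k in zip(Q[n], K[m])) * gamma ** (n - m)
            for j in range(d_v):
                row[j] += score * V[m][j]
        out.append(row)
    return out


def retention_recurrent(Q, K, V, gamma):
    """Recurrent form: S_n = gamma * S_{n-1} + k_n^T v_n, o_n = q_n S_n."""
    d_k, d_v = len(K[0]), len(V[0])
    S = [[0.0] * d_v for _ in range(d_k)]  # fixed-size state, independent of sequence length
    out = []
    for q, k, v in zip(Q, K, V):
        S = [[gamma * S[i][j] + k[i] * v[j] for j in range(d_v)] for i in range(d_k)]
        out.append([sum(q[i] * S[i][j] for i in range(d_k)) for j in range(d_v)])
    return out
```

Both functions return the same outputs for the same inputs (up to floating-point rounding). The recurrent version only ever holds a `d_k × d_v` state, which is where the O(1) per-token inference cost in the abstract comes from, while the parallel version exposes all positions at once for training.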

Authors: Yutao Sun, Li Dong, Shaohan Huang, Shuming Ma, Yuqing Xia, Jilong Xue, Jianyong Wang, Furu Wei

Links:

Homepage: https://ykilcher.com

Merch: https://ykilcher.com/merch

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://ykilcher.com/discord

LinkedIn: https://www.linkedin.com/in/ykilcher

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannickilcher

Patreon: https://www.patreon.com/yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n
