
ActInf GuestStream 115.1 ~ Energy-Based Transformers and the Future of Scaling

"Energy-Based Transformers are Scalable Learners and Thinkers"

Alexi Gladstone, Ganesh Nanduru, Md Mofijul Islam, Peixuan Han, Hyeonjeong Ha, Aman Chadha, Yilun Du, Heng Ji, Jundong Li, Tariq Iqbal

https://arxiv.org/abs/2507.02092

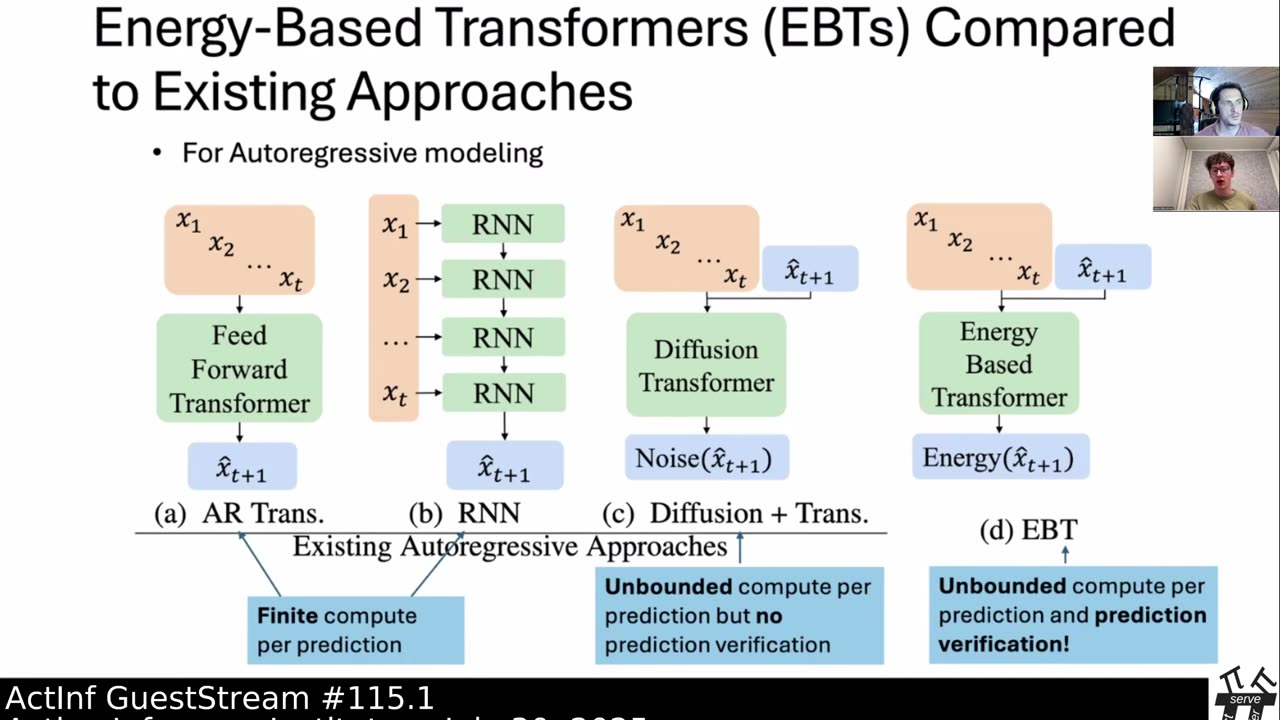

Inference-time computation techniques, analogous to human System 2 Thinking, have recently become popular for improving model performance. However, most existing approaches suffer from several limitations: they are modality-specific (e.g., working only in text), problem-specific (e.g., verifiable domains like math and coding), or require additional supervision/training on top of unsupervised pretraining (e.g., verifiers or verifiable rewards). In this paper, we ask the question "Is it possible to generalize these System 2 Thinking approaches, and develop models that learn to think solely from unsupervised learning?" Interestingly, we find the answer is yes, by learning to explicitly verify the compatibility between inputs and candidate-predictions, and then re-framing prediction problems as optimization with respect to this verifier. Specifically, we train Energy-Based Transformers (EBTs) -- a new class of Energy-Based Models (EBMs) -- to assign an energy value to every input and candidate-prediction pair, enabling predictions through gradient descent-based energy minimization until convergence. Across both discrete (text) and continuous (visual) modalities, we find EBTs scale faster than the dominant Transformer++ approach during training, achieving an up to 35% higher scaling rate with respect to data, batch size, parameters, FLOPs, and depth. During inference, EBTs improve performance with System 2 Thinking by 29% more than the Transformer++ on language tasks, and EBTs outperform Diffusion Transformers on image denoising while using fewer forward passes. Further, we find that EBTs achieve better results than existing models on most downstream tasks given the same or worse pretraining performance, suggesting that EBTs generalize better than existing approaches. Consequently, EBTs are a promising new paradigm for scaling both the learning and thinking capabilities of models.
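The core mechanism described in the abstract (a scalar energy for every input/candidate-prediction pair, with prediction carried out by gradient descent on that energy until convergence) can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not the authors' code: `toy_energy` is a hypothetical hand-written quadratic standing in for a trained EBT, the gradient is approximated with central finite differences instead of automatic differentiation, and the names `minimize_energy`, `think`, `step_size`, `max_steps` and `num_candidates` are illustrative choices. Using the energy as a verifier to pick the best of several minimized candidates is one reading of the "verify, then optimize" framing above.

```python
import math
import random
from typing import Callable, List, Optional, Sequence, Tuple

Energy = Callable[[Sequence[float], Sequence[float]], float]


def toy_energy(x: Sequence[float], y: Sequence[float]) -> float:
    """Hypothetical stand-in for a trained EBT: energy is low when y ~= 2*x + 1."""
    target = [2.0 * xi + 1.0 for xi in x]  # assumed "compatible" prediction for x
    return 0.5 * sum((yi - ti) ** 2 for yi, ti in zip(y, target))


def numerical_grad(energy: Energy, x: Sequence[float], y: Sequence[float],
                   eps: float = 1e-6) -> List[float]:
    """Central finite-difference gradient of the energy w.r.t. the prediction y only."""
    grad = []
    for i in range(len(y)):
        y_plus, y_minus = list(y), list(y)
        y_plus[i] += eps
        y_minus[i] -= eps
        grad.append((energy(x, y_plus) - energy(x, y_minus)) / (2.0 * eps))
    return grad


def minimize_energy(energy: Energy, x: Sequence[float], y0: Sequence[float],
                    step_size: float = 0.1, max_steps: int = 500,
                    tol: float = 1e-6) -> Tuple[List[float], List[float]]:
    """Refine a candidate prediction by gradient descent until the gradient norm < tol."""
    y = list(y0)
    trace = [energy(x, y)]  # energy after each step, for inspection
    for _ in range(max_steps):
        g = numerical_grad(energy, x, y)
        if math.sqrt(sum(gi * gi for gi in g)) < tol:
            break  # treated as converged
        y = [yi - step_size * gi for yi, gi in zip(y, g)]
        trace.append(energy(x, y))
    return y, trace


def think(energy: Energy, x: Sequence[float], num_candidates: int = 4,
          seed: int = 0, **kwargs) -> Tuple[Optional[List[float]], float]:
    """Minimize from several random starts; keep the lowest-energy (best-verified) result."""
    rng = random.Random(seed)
    best_y: Optional[List[float]] = None
    best_e = math.inf
    for _ in range(num_candidates):
        y0 = [rng.uniform(-5.0, 5.0) for _ in x]
        y, trace = minimize_energy(energy, x, y0, **kwargs)
        if trace[-1] < best_e:
            best_y, best_e = y, trace[-1]
    return best_y, best_e
```

For `x = [1.0, -0.5]` the toy energy is minimized at `[3.0, 0.0]`, so `think(toy_energy, [1.0, -0.5])` should return a point very close to that. Raising `max_steps` or `num_candidates` spends more computation at inference time, which loosely mirrors how the abstract frames System 2 Thinking; in an actual EBT the energy would be the scalar output of a Transformer and the gradient would come from backpropagation rather than finite differences.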

Subjects: Machine Learning (cs.LG); Artificial Intelligence (cs.AI); Computation and Language (cs.CL); Computer Vision and Pattern Recognition (cs.CV)

Cite as: arXiv:2507.02092 [cs.LG]

(or arXiv:2507.02092v1 [cs.LG] for this version)

https://doi.org/10.48550/arXiv.2507.02092

Active Inference Institute information:

Website: https://www.activeinference.institute/

Activities: https://activities.activeinference.institute/

Discord: https://discord.activeinference.institute/

Donate: http://donate.activeinference.institute/

YouTube: https://www.youtube.com/c/ActiveInference/

X: https://x.com/InferenceActive

Active Inference Livestreams: https://video.activeinference.institute/
