ActInf GuestStream 115.1 ~ Energy-Based Transformers and the Future of Scaling

"Energy-Based Transformers are Scalable Learners and Thinkers"

Alexi Gladstone, Ganesh Nanduru, Md Mofijul Islam, Peixuan Han, Hyeonjeong Ha, Aman Chadha, Yilun Du, Heng Ji, Jundong Li, Tariq Iqbal

https://arxiv.org/abs/2507.02092

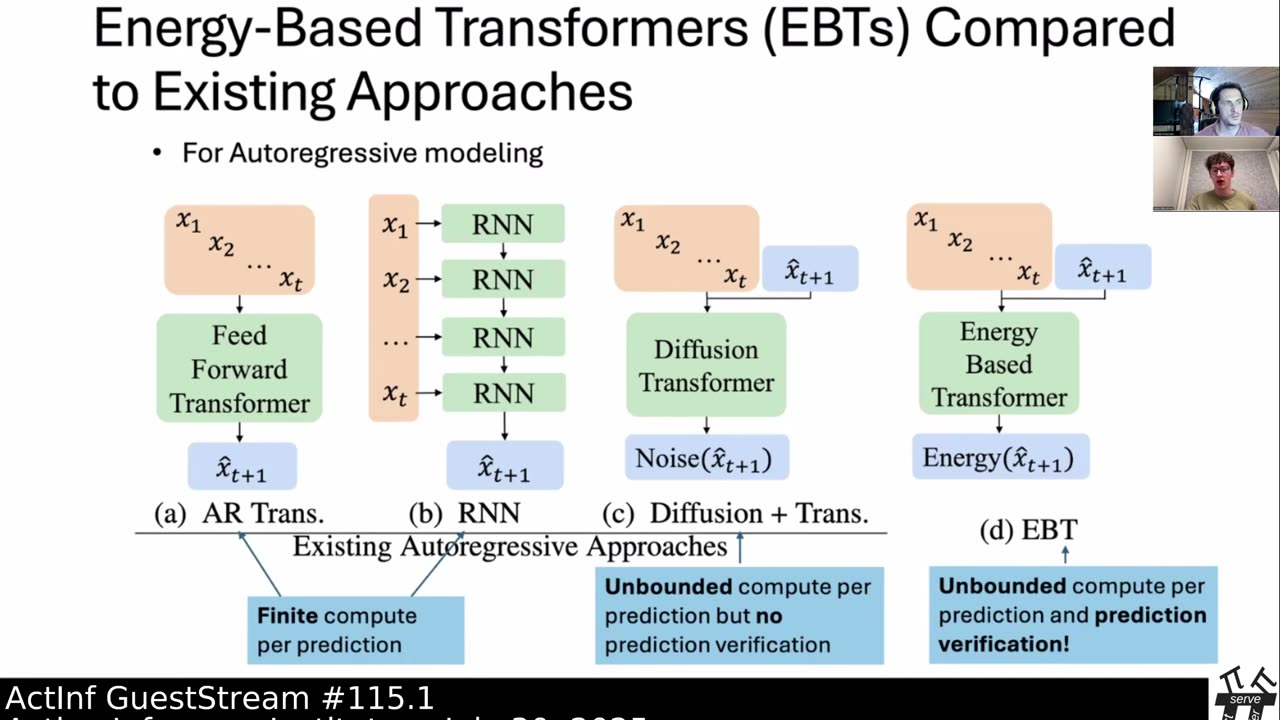

Inference-time computation techniques, analogous to human System 2 Thinking, have recently become popular for improving model performance. However, most existing approaches suffer from several limitations: they are modality-specific (e.g., working only in text), problem-specific (e.g., verifiable domains like math and coding), or require additional supervision/training on top of unsupervised pretraining (e.g., verifiers or verifiable rewards). In this paper, we ask the question "Is it possible to generalize these System 2 Thinking approaches, and develop models that learn to think solely from unsupervised learning?" Interestingly, we find the answer is yes, by learning to explicitly verify the compatibility between inputs and candidate-predictions, and then re-framing prediction problems as optimization with respect to this verifier. Specifically, we train Energy-Based Transformers (EBTs) -- a new class of Energy-Based Models (EBMs) -- to assign an energy value to every input and candidate-prediction pair, enabling predictions through gradient descent-based energy minimization until convergence. Across both discrete (text) and continuous (visual) modalities, we find EBTs scale faster than the dominant Transformer++ approach during training, achieving up to a 35% higher scaling rate with respect to data, batch size, parameters, FLOPs, and depth. During inference, EBTs improve performance with System 2 Thinking by 29% more than the Transformer++ on language tasks, and EBTs outperform Diffusion Transformers on image denoising while using fewer forward passes. Further, we find that EBTs achieve better results than existing models on most downstream tasks given the same or worse pretraining performance, suggesting that EBTs generalize better than existing approaches. Consequently, EBTs are a promising new paradigm for scaling both the learning and thinking capabilities of models.
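
To make the "prediction as energy minimization" idea in the abstract concrete, here is a minimal toy sketch in plain Python. It is not the paper's architecture: the quadratic `toy_energy` function, the finite-difference gradient, the step size, and the stopping tolerance are all assumptions chosen for illustration, standing in for a learned Transformer that scores input and candidate-prediction pairs. The only part taken from the abstract is the loop itself: start from a candidate prediction and descend the energy until it stops changing.

```python
from typing import Callable, Tuple


def toy_energy(x: float, y: float) -> float:
    """Hypothetical energy: low when candidate y is compatible with input x.

    Here "compatible" is assumed to mean y is close to 2*x + 1. In an EBT this
    scalar would come from a trained network, not a fixed formula.
    """
    return (y - (2.0 * x + 1.0)) ** 2


def numerical_grad(f: Callable[[float], float], y: float, eps: float = 1e-5) -> float:
    """Central-difference estimate of df/dy (stand-in for automatic differentiation)."""
    return (f(y + eps) - f(y - eps)) / (2.0 * eps)


def predict_by_energy_minimization(
    x: float,
    y_init: float = 0.0,
    step_size: float = 0.1,
    max_steps: int = 200,
    tol: float = 1e-8,
) -> Tuple[float, int]:
    """Refine a candidate prediction by gradient descent on the energy.

    Returns the final candidate and the number of descent steps taken.
    Stops early once the energy changes by less than `tol` between steps.
    """
    y = y_init
    for step in range(1, max_steps + 1):
        grad = numerical_grad(lambda c: toy_energy(x, c), y)
        y_new = y - step_size * grad
        if abs(toy_energy(x, y_new) - toy_energy(x, y)) < tol:
            return y_new, step
        y = y_new
    return y, max_steps


if __name__ == "__main__":
    prediction, steps = predict_by_energy_minimization(x=3.0)
    print(f"prediction={prediction:.4f} after {steps} steps, "
          f"energy={toy_energy(3.0, prediction):.2e}")
```

In this sketch, allowing more descent steps (a larger `max_steps` or a smaller `tol`) spends more computation per prediction and drives the energy lower, which is a loose analogue of the inference-time "thinking" described above.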

Subjects: Machine Learning (cs.LG); Artificial Intelligence (cs.AI); Computation and Language (cs.CL); Computer Vision and Pattern Recognition (cs.CV)

Cite as: arXiv:2507.02092 [cs.LG]

(or arXiv:2507.02092v1 [cs.LG] for this version)

https://doi.org/10.48550/arXiv.2507.02092

Active Inference Institute information:

Website: https://www.activeinference.institute/

Activities: https://activities.activeinference.institute/

Discord: https://discord.activeinference.institute/

Donate: http://donate.activeinference.institute/

YouTube: https://www.youtube.com/c/ActiveInference/

X: https://x.com/InferenceActive

Active Inference Livestreams: https://video.activeinference.institute/
