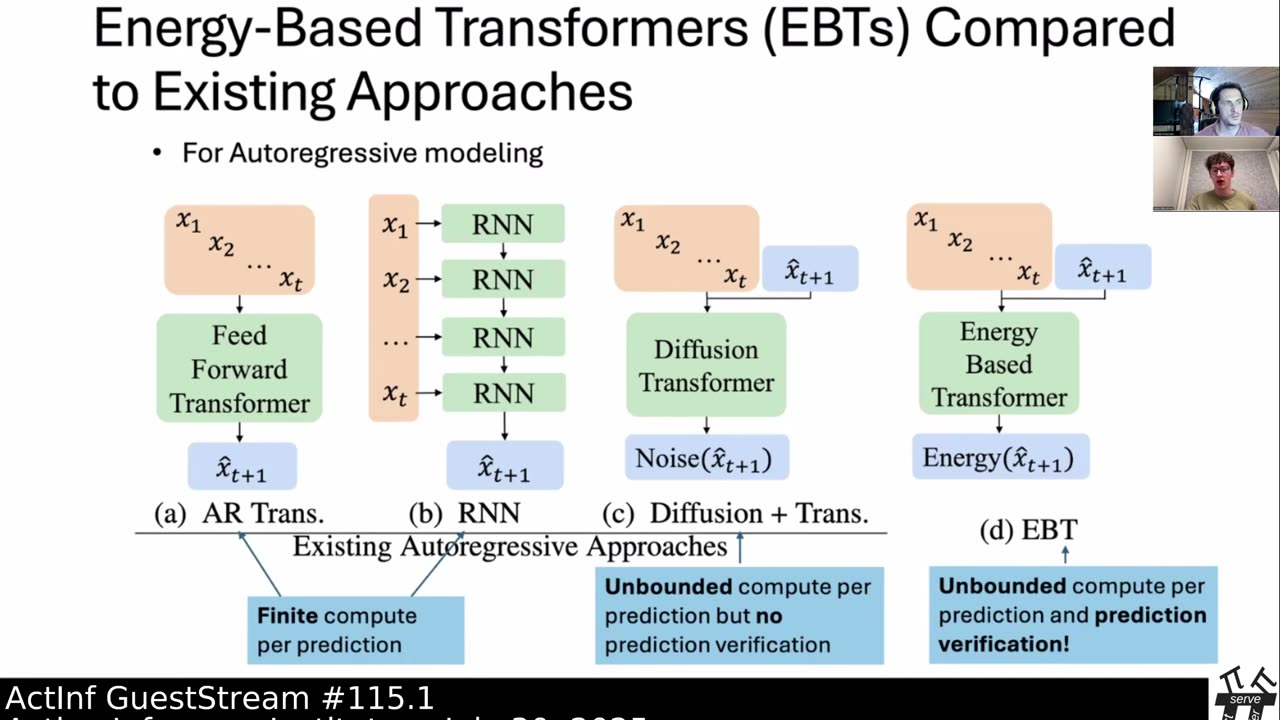

ActInf GuestStream 115.1 ~ Energy-Based Transformers and the Future of Scaling

"Energy-Based Transformers are Scalable Learners and Thinkers"

Alexi Gladstone, Ganesh Nanduru, Md Mofijul Islam, Peixuan Han, Hyeonjeong Ha, Aman Chadha, Yilun Du, Heng Ji, Jundong Li, Tariq Iqbal

https://arxiv.org/abs/2507.02092

Inference-time computation techniques, analogous to human System 2 Thinking, have recently become popular for improving model performances. However, most existing approaches suffer from several limitations: they are modality-specific (e.g., working only in text), problem-specific (e.g., verifiable domains like math and coding), or require additional supervision/training on top of unsupervised pretraining (e.g., verifiers or verifiable rewards). In this paper, we ask the question "Is it possible to generalize these System 2 Thinking approaches, and develop models that learn to think solely from unsupervised learning?" Interestingly, we find the answer is yes, by learning to explicitly verify the compatibility between inputs and candidate-predictions, and then re-framing prediction problems as optimization with respect to this verifier. Specifically, we train Energy-Based Transformers (EBTs) -- a new class of Energy-Based Models (EBMs) -- to assign an energy value to every input and candidate-prediction pair, enabling predictions through gradient descent-based energy minimization until convergence. Across both discrete (text) and continuous (visual) modalities, we find EBTs scale faster than the dominant Transformer++ approach during training, achieving an up to 35% higher scaling rate with respect to data, batch size, parameters, FLOPs, and depth. During inference, EBTs improve performance with System 2 Thinking by 29% more than the Transformer++ on language tasks, and EBTs outperform Diffusion Transformers on image denoising while using fewer forward passes. Further, we find that EBTs achieve better results than existing models on most downstream tasks given the same or worse pretraining performance, suggesting that EBTs generalize better than existing approaches. Consequently, EBTs are a promising new paradigm for scaling both the learning and thinking capabilities of models.
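The core idea in the abstract, predicting by minimizing an energy over candidate predictions with gradient descent, can be illustrated with a very small sketch. This is not the paper's model: a hand-written quadratic energy stands in for a learned Energy-Based Transformer, and the names `energy`, `energy_grad_y`, `predict`, the weight `w`, and the step size are all assumptions made for illustration.

```python
# Toy illustration only: a hand-written quadratic energy stands in for a
# learned Energy-Based Transformer. All names and values are hypothetical.

def energy(x, y, w=2.0):
    """Scalar energy for an (input, candidate-prediction) pair.

    Lower energy means the candidate y is more compatible with the input x.
    """
    return 0.5 * (y - w * x) ** 2


def energy_grad_y(x, y, w=2.0):
    """Analytic gradient of the toy energy with respect to the candidate y."""
    return y - w * x


def predict(x, y_init=0.0, step_size=0.5, tol=1e-8, max_steps=1000):
    """Refine a candidate prediction by gradient descent on the energy.

    Stops when an update changes y by less than tol, or after max_steps.
    Returns the final candidate and the number of steps taken.
    """
    y = y_init
    steps = 0
    for steps in range(1, max_steps + 1):
        y_new = y - step_size * energy_grad_y(x, y)
        converged = abs(y_new - y) < tol
        y = y_new
        if converged:
            break
    return y, steps


y_hat, n_steps = predict(x=3.0)
print(f"prediction={y_hat:.6f}, steps={n_steps}, energy={energy(3.0, y_hat):.2e}")
```

In this sketch, more descent steps correspond to more inference-time computation on a single prediction, which is the sense in which the abstract ties energy minimization to System 2 Thinking. In an actual EBT the energy would be a trained network and the gradient would come from automatic differentiation rather than a closed-form expression.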

Subjects: Machine Learning (cs.LG); Artificial Intelligence (cs.AI); Computation and Language (cs.CL); Computer Vision and Pattern Recognition (cs.CV)

Cite as: arXiv:2507.02092 [cs.LG]

(or arXiv:2507.02092v1 [cs.LG] for this version)

https://doi.org/10.48550/arXiv.2507.02092

Active Inference Institute information:

Website: https://www.activeinference.institute/

Activities: https://activities.activeinference.institute/

Discord: https://discord.activeinference.institute/

Donate: http://donate.activeinference.institute/

YouTube: https://www.youtube.com/c/ActiveInference/

X: https://x.com/InferenceActive

Active Inference Livestreams: https://video.activeinference.institute/
